Hi, okay, I did it. Attached is the new firmware. Here’s some sample code:

# Single Color RGB565 Blob Tracking Example

#

# This example shows off single color RGB565 tracking using the OpenMV Cam.

import sensor, image, time, math

threshold_index = 0 # 0 for red, 1 for green, 2 for blue

# Color Tracking Thresholds (L Min, L Max, A Min, A Max, B Min, B Max)

# The below thresholds track in general red/green/blue things. You may wish to tune them...

thresholds = [(30, 100, 15, 127, 15, 127), # generic_red_thresholds

(30, 100, -64, -8, -32, 32), # generic_green_thresholds

(0, 30, 0, 64, -128, 0)] # generic_blue_thresholds

sensor.reset()

sensor.set_pixformat(sensor.RGB565)

sensor.set_framesize(sensor.QVGA)

sensor.skip_frames(time = 2000)

sensor.set_auto_gain(False) # must be turned off for color tracking

sensor.set_auto_whitebal(False) # must be turned off for color tracking

clock = time.clock()

# Only blobs that with more pixels than "pixel_threshold" and more area than "area_threshold" are

# returned by "find_blobs" below. Change "pixels_threshold" and "area_threshold" if you change the

# camera resolution. "merge=True" merges all overlapping blobs in the image.

while(True):

clock.tick()

img = sensor.snapshot()

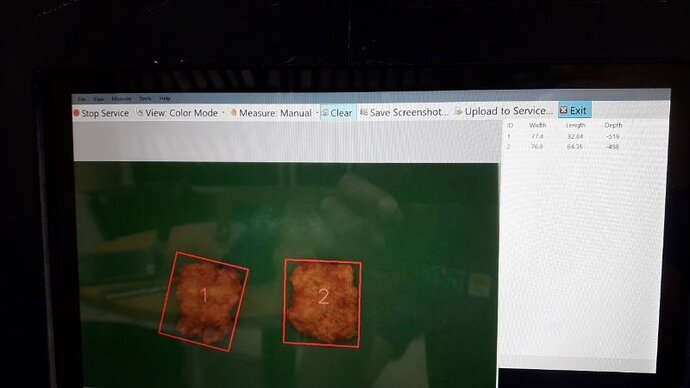

for blob in img.find_blobs([thresholds[threshold_index]], pixels_threshold=200, area_threshold=200):

img.draw_rectangle(blob.rect())

img.draw_cross(blob.cx(), blob.cy())

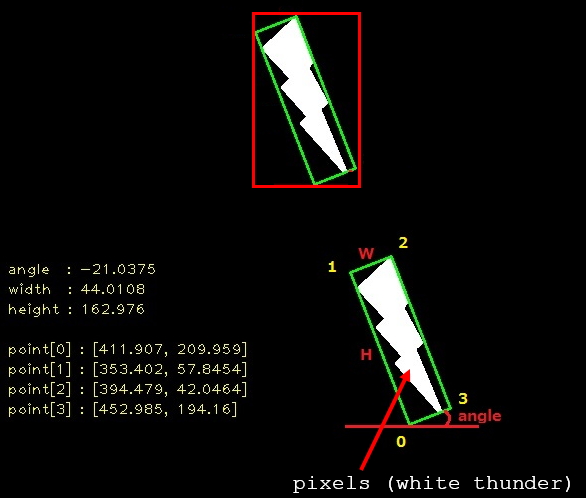

img.draw_line(blob.corners()[0][0], blob.corners()[0][1], blob.corners()[1][0], blob.corners()[1][1], color=(0,255,0))

img.draw_line(blob.corners()[1][0], blob.corners()[1][1], blob.corners()[2][0], blob.corners()[2][1], color=(0,255,0))

img.draw_line(blob.corners()[2][0], blob.corners()[2][1], blob.corners()[3][0], blob.corners()[3][1], color=(0,255,0))

img.draw_line(blob.corners()[3][0], blob.corners()[3][1], blob.corners()[0][0], blob.corners()[0][1], color=(0,255,0))

img.draw_line(blob.min_corners()[0][0], blob.min_corners()[0][1], blob.min_corners()[1][0], blob.min_corners()[1][1], color=(0,0,255))

img.draw_line(blob.min_corners()[1][0], blob.min_corners()[1][1], blob.min_corners()[2][0], blob.min_corners()[2][1], color=(0,0,255))

img.draw_line(blob.min_corners()[2][0], blob.min_corners()[2][1], blob.min_corners()[3][0], blob.min_corners()[3][1], color=(0,0,255))

img.draw_line(blob.min_corners()[3][0], blob.min_corners()[3][1], blob.min_corners()[0][0], blob.min_corners()[0][1], color=(0,0,255))

print(clock.fps())
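The comment in the example says to change `pixels_threshold` and `area_threshold` when you change resolution. `pixels_threshold` is a count of matching pixels and `area_threshold` is a bounding-box area, so both are measured in pixels and a reasonable starting point is to scale them by the ratio of the two frame areas. Here's a minimal sketch of that arithmetic; `scale_threshold` and the `FRAME_SIZES` table are hypothetical helpers, not part of the OpenMV API, and you'll still want to re-tune by eye afterwards.

```python
# Hypothetical helper (not part of the OpenMV API): rescale a pixel-count
# threshold tuned at one resolution so it means roughly the same thing at another.
# Both pixels_threshold and area_threshold are in pixels, so they scale with
# the ratio of the two frame areas.

FRAME_SIZES = {
    "QQVGA": (160, 120),
    "QVGA": (320, 240),
    "VGA": (640, 480),
}


def scale_threshold(value, from_size, to_size):
    """Scale a pixel-count threshold from one frame size to another."""
    fw, fh = FRAME_SIZES[from_size]
    tw, th = FRAME_SIZES[to_size]
    # Round to the nearest whole pixel and never drop below 1.
    return max(1, round(value * (tw * th) / (fw * fh)))


# The example above uses 200 for both thresholds at QVGA.
print(scale_threshold(200, "QVGA", "QQVGA"))  # 50
print(scale_threshold(200, "QVGA", "VGA"))    # 800
```

Halving both width and height quarters the frame area, which is why 200 at QVGA becomes 50 at QQVGA.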

Drawing the lines is kinda a pain right now. I’ll add two more draw methods to make this easier.
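Until those draw methods exist, the four `draw_line` calls per quadrilateral can be collapsed into a loop. A minimal sketch, assuming each corner list is four `(x, y)` tuples as returned by `blob.corners()` / `blob.min_corners()`; `quad_segments` is a hypothetical helper, not a firmware function.

```python
def quad_segments(corners):
    """Return the edges of a closed polygon as (x0, y0, x1, y1) tuples.

    `corners` is a list of (x, y) points, e.g. blob.corners() or
    blob.min_corners(); the last edge wraps back to the first corner.
    """
    n = len(corners)
    return [
        (corners[i][0], corners[i][1], corners[(i + 1) % n][0], corners[(i + 1) % n][1])
        for i in range(n)
    ]


# Inside the blob loop on the camera you would then write:
#     for seg in quad_segments(blob.corners()):
#         img.draw_line(*seg, color=(0, 255, 0))
#     for seg in quad_segments(blob.min_corners()):
#         img.draw_line(*seg, color=(0, 0, 255))

print(quad_segments([(0, 0), (4, 0), (4, 3), (0, 3)]))
```

The modulo on the index is what closes the shape, so the same helper works for any number of corners.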

So, `corners()` returns the object's corners. They're always sorted left, top, right, bottom. `min_corners()` then returns the corners of the min area rect. I was going to call it `min_area_rect_corners()`, but that was too verbose.
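For anyone curious what a "min area rect" is: it's the smallest-area rectangle, at any rotation, that encloses a set of points. The post doesn't say how the firmware computes it, so this is not its code. A standard approach is to take the convex hull and, for each hull edge, measure the bounding box aligned with that edge, keeping the smallest (the optimal rectangle always has a side collinear with some hull edge). A plain-Python sketch under those assumptions; `convex_hull` and `min_area_rect` are hypothetical names, and the input is assumed to be at least three non-collinear points.

```python
import math


def convex_hull(points):
    """Andrew's monotone chain; returns hull vertices counter-clockwise."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def min_area_rect(points):
    """Return (area, corners) of the minimum-area enclosing rectangle."""
    hull = convex_hull(points)
    if len(hull) < 3:
        raise ValueError("need at least 3 non-collinear points")
    best = None
    for i in range(len(hull)):
        (x0, y0), (x1, y1) = hull[i], hull[(i + 1) % len(hull)]
        length = math.sqrt((x1 - x0) ** 2 + (y1 - y0) ** 2)
        ux, uy = (x1 - x0) / length, (y1 - y0) / length  # unit vector along the edge
        vx, vy = -uy, ux                                 # unit normal to the edge
        # Project every hull point onto the edge axis and its normal.
        us = [px * ux + py * uy for px, py in hull]
        vs = [px * vx + py * vy for px, py in hull]
        umin, umax, vmin, vmax = min(us), max(us), min(vs), max(vs)
        area = (umax - umin) * (vmax - vmin)
        if best is None or area < best[0]:
            # Map the four (u, v) extremes back to image (x, y) coordinates.
            corners = [
                (u * ux + v * vx, u * uy + v * vy)
                for u, v in ((umin, vmin), (umax, vmin), (umax, vmax), (umin, vmax))
            ]
            best = (area, corners)
    return best


# A diamond: its axis-aligned box has area 16, but the min area rect is the diamond itself.
area, corners = min_area_rect([(0, 2), (2, 0), (4, 2), (2, 4)])
print(round(area, 6), [(round(x), round(y)) for x, y in corners])
```

Only the hull points matter here, which is also why a rectangle built from a handful of boundary points can shift noticeably when one of those points moves.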

Note that since the min area rect is generated from just a few points, it's quite jumpy. Set your thresholds well.
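If good thresholds still leave some jitter, one generic option (not something the firmware does) is to smooth the corners over time with an exponential moving average. A minimal sketch, assuming you track a single object and that `min_corners()` returns its corners in the same order every frame; if the order can rotate between frames, the average will blend the wrong corners. `CornerSmoother` and its `alpha` parameter are hypothetical.

```python
class CornerSmoother:
    """Exponential moving average over a fixed-length list of (x, y) corners.

    Hypothetical helper, not part of the firmware. alpha near 1 follows the
    raw corners closely; alpha near 0 smooths more but lags behind motion.
    Assumes corners arrive in the same order every frame.
    """

    def __init__(self, alpha=0.3):
        self.alpha = alpha
        self.state = None

    def update(self, corners):
        if self.state is None:
            # First frame: start from the raw corners.
            self.state = [(float(x), float(y)) for x, y in corners]
        else:
            a = self.alpha
            self.state = [
                (sx + a * (x - sx), sy + a * (y - sy))
                for (sx, sy), (x, y) in zip(self.state, corners)
            ]
        # Return integer pixel coordinates ready for drawing.
        return [(int(round(x)), int(round(y))) for x, y in self.state]


# On the camera: create one smoother before the loop, then per frame call
#     smoothed = smoother.update(blob.min_corners())
smoother = CornerSmoother(alpha=0.5)
print(smoother.update([(0, 0), (10, 0), (10, 10), (0, 10)]))
print(smoother.update([(2, 0), (12, 0), (12, 10), (2, 10)]))
```

The trade-off is lag: the smaller `alpha` is, the steadier the rectangle, but the longer it takes to catch up when the object actually moves.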

firmware.zip (918 KB)