Howdy!

We’re having a great time with your H7 cameras - they are fast and provide a lot of power for our small project.

Can you provide any guidance on how to differentiate between objects where the thresholds for one are a subset of another?

We’re using color tracking to follow an Orange ball on a 3-meter-long green field. By itself, this works great and is almost 100% accurate at VGA resolution.

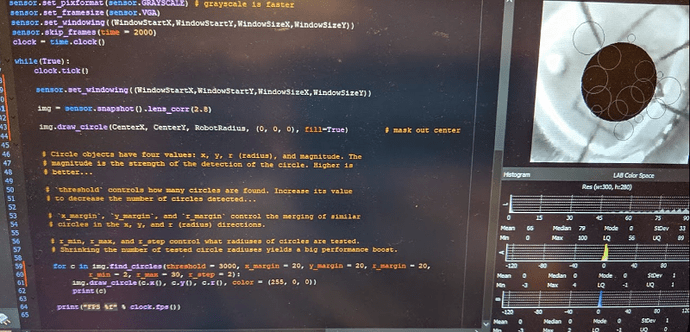

However, we have not been able to find a way to design non-overlapping thresholds for the Orange ball when we need to also track a Yellow target that the ball needs to be moved into - see histograms below.
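To make the overlap concrete, here is a minimal plain-Python sketch of how two LAB threshold tuples can be checked against each other. The tuples are in the (L_min, L_max, A_min, A_max, B_min, B_max) order that find_blobs expects. The numbers are made up for illustration and are not our real calibration; they are chosen so that the Yellow range sits inside the Ball range, which matches what we see when the Ball search also fires on the Yellow target. The helper names are hypothetical.

```python
# Hypothetical LAB thresholds: (L_min, L_max, A_min, A_max, B_min, B_max).
# These values are illustrative only, not measured from our field.
EXAMPLE_THRESHOLDS_BALL = (40, 90, -10, 60, 30, 80)
EXAMPLE_THRESHOLDS_YELLOW = (60, 85, -5, 20, 45, 70)


def lab_is_subset(inner, outer):
    """True if every channel range of `inner` lies within `outer`.

    Assumes each (min, max) pair is already ordered min <= max.
    """
    return all(
        outer[i] <= inner[i] and inner[i + 1] <= outer[i + 1]
        for i in (0, 2, 4)
    )


def lab_overlaps(a, b):
    """True if the two thresholds share some region in all three channels."""
    return all(a[i] <= b[i + 1] and b[i] <= a[i + 1] for i in (0, 2, 4))


print(lab_is_subset(EXAMPLE_THRESHOLDS_YELLOW, EXAMPLE_THRESHOLDS_BALL))
print(lab_overlaps(EXAMPLE_THRESHOLDS_YELLOW, EXAMPLE_THRESHOLDS_BALL))
```

When the first check is true, any pixel that passes the Yellow threshold also passes the Ball threshold, so the Ball search cannot tell the two apart on color alone.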

We split the tracking into two separate find_blobs calls so we could set different search parameters for the Orange ball versus the Yellow rectangular target. We also tried both thresholds in a single find_blobs call and separated the blobs by decoding the binary blob.code() value, but the results were the same: the Yellow target tracks well, while the ball tracks only when it is in isolation.
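For reference, the decoding in the single-call variant looked roughly like the sketch below. It assumes the thresholds were passed as [thresholds_Yellow, thresholds_Ball], so that bit 0 of blob.code() corresponds to Yellow and bit 1 to the Ball. The helper name and the label strings are hypothetical, and the function takes the integer code directly so it can be tried off-camera.

```python
# Bit positions follow the order of the thresholds list given to find_blobs,
# assumed here to be [thresholds_Yellow, thresholds_Ball].
YELLOW_BIT = 1 << 0
BALL_BIT = 1 << 1


def decode_blob_code(code):
    """Return the labels whose threshold bits are set in a blob code."""
    labels = []
    if code & YELLOW_BIT:
        labels.append("yellow")
    if code & BALL_BIT:
        labels.append("ball")
    return labels


# With merge=True, a merged blob can carry both bits at once.
for example_code in (1, 2, 3):
    print(example_code, decode_blob_code(example_code))
```

On the camera this would be called as decode_blob_code(blob.code()) inside the find_blobs loop.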

Sadly, we have no control over the lighting - so we need to be tolerant of slightly varying light levels and shadows.

Our code for finding the Yellow target is as follows, and is working well:

    for blob in img.find_blobs([thresholds_Yellow], pixels_threshold=50, area_threshold=50, merge=True, margin=50):

Our code for finding the Orange ball is as follows. Since we need to locate the ball at a 3 meter distance - where it appears as only a few pixels - we’ve set pixels_threshold low. This works well when the Yellow target is not present, but when the target is in view the routine detects it as well.

    for blob in img.find_blobs([thresholds_Ball], pixels_threshold=3, merge=True, margin=5):

We’ve tried many other blob properties to differentiate between the two objects, including Roundness, Elongation, Compactness and Density - but have not been able to get any useful, consistent readings. For example, Roundness numbers for the Yellow target often exceed those of the ball. This may be because the Orange ball blobs are seldom ball-shaped (the light gradients on the ball make it difficult to identify the entire ball), because of distortion in our optical field, or both. Elongation values for the Yellow target are also all over the scale.

We had some success checking that the histogram range of the Orange ball candidate was larger than that of the Yellow target - but this is not reliable. The code below is likely a bad idea; we’re posting it only to show the desperate attempts we’ve made to solve this challenge.

    r = blob.rect()  # bounding rectangle of the potential Orange ball blob, used as the histogram ROI
    histBall = img.get_histogram(roi=r)  # get a histogram of that area
    hi = histBall.get_percentile(0.99)
    lo = histBall.get_percentile(0.01)
    print(lo.a_value(), hi.a_value())
    # Compare the hi/lo A-channel range of the ideal Ball with the observed range and mark this as a 'best' candidate if appropriate
    if abs(abs(thresholds_Ball[3] - thresholds_Ball[2]) - abs(hi.a_value() - lo.a_value())) < 15:
        BestZ[IndexBall] = blob.pixels()
        BestX[IndexBall] = blob.cx()
        BestY[IndexBall] = blob.cy()
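The comparison in that if statement can be pulled out into a small plain-Python function so it can be exercised with known numbers off-camera. This is only a sketch of the same check: the function name is hypothetical, the threshold tuple and sample values are made up, and the tolerance of 15 is the one from our code.

```python
def a_span_matches(threshold, lo_a, hi_a, tolerance=15):
    """True if the observed A-channel span is within `tolerance` of the
    span defined by the threshold's A_min/A_max (indices 2 and 3)."""
    expected_span = abs(threshold[3] - threshold[2])
    observed_span = abs(hi_a - lo_a)
    return abs(expected_span - observed_span) < tolerance


# Hypothetical Ball threshold: (L_min, L_max, A_min, A_max, B_min, B_max).
example_thresholds_ball = (40, 90, 10, 60, 30, 80)

# A blob whose 1st/99th percentile A values span almost the whole threshold range.
print(a_span_matches(example_thresholds_ball, 12, 58))
# A blob with a much narrower A spread.
print(a_span_matches(example_thresholds_ball, 15, 25))
```

On the camera, lo_a and hi_a would be lo.a_value() and hi.a_value() from the percentile calls above.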

Any suggestions you can provide to help track the Orange ball when the Yellow target is around are greatly appreciated.

Thanks,