Hi,

working with the examples, I noticed that when I disconnect the board, the code loaded in the IDE is never present in the main.py file. So my doubt is whether what I see running in the IDE is mainly the result of processing done by the PC inside the IDE rather than on the board itself.

In other words, if I load, for instance, the face_tracking example, is it feasible for the board to then send the center coordinates of a face over serial once it’s disconnected from my PC?

Do I have to copy the code into the main.py file for it to work, or do I always need the board connected to the PC to have the data processed?

This is probably one of the most stupid questions raised in the forum, but I was expecting the code shown in the IDE to be loaded onto the board every time, whereas when I disconnect the board it always runs the same basic script that switches the LEDs on and off. Thanks, Roberto

On my phone, so, short answer.

Click Tools, and then Save to OpenMV Cam. The IDE will then ask you if you want comments stripped. Then go to Tools and click Reset OpenMV Cam.

The board will now run the script you loaded onto it without the IDE.

Hi ,

with IDE 1.0.0 there is the option you mention under Tools.

With IDE 1.5.0, the download button that appears at startup doesn’t work, so I don’t understand whether it’s just an alert or whether it’s supposed to actually trigger a download.

Under Tools there is only the Bootloader option. Is this the option to load code onto the board?

The IDE doesn’t ask anything about comments, and by the way, with this version I can’t connect to the board at all, while with version 1.0.0 I can.

I’m quite confused and I have no clear idea which is the right combination of firmware and IDE version…

Hi, you are using the super old IDE. Please download the new IDE from the downloads page on our website.

HI,

as suggested I’ve downloaded the ide from this link https://openmv.io/download/.

Now I’ve the ide 1.2 installed, and I hope that is the right one. On the board I’ve flashed the firmware 1.7 ( only the file openvm.df2 from OPENVM2 folder ).

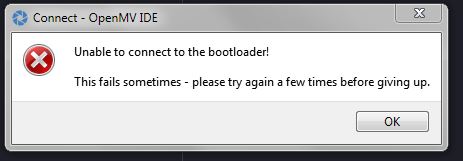

When i connect the board is recognised undec com9 port. When I start the ide and i try to connec the board first I got a popup that say that the IDE need upgrade the firmware of my board , when I say YES after few second the board is disconnected the reconnected again .At that point another popup say that isn able to to connect the bootloader and try again .After have tried many time and chenged the usb cable more than ones, flasshed also the firmware 1.6 but nothin change .

With the old firmare I never got this kind of problems , but every time there is some new bag … and I start really to lost confidence on this project ![]() .

.

Hi Bob63,

Yeah, it looks like nothing has ever worked for you, so I can imagine you’d think the system is bad. We’re kinda in alpha right now…

Anyway, I’ll have a new version of the IDE out in the next two weeks with improved bootloader code that should connect more often. However, the bootloader system we’re using has far too low a serial timeout (500 ms) for it to connect to the host PC, which is why it fails. So, the next version of the IDE also has built-in DFU support, which will get around this issue.

As for increasing the serial timeout, we’ll be doing that in the next revision of the OpenMV Cam hardware. The updated bootloader code in the IDE can only do so much.

So… I’ve attached a pre-release of the new IDE to this thread. The built-in DFU loader should kick in after the regular bootloader fails to connect. DFU bootloading should never fail. This version does not include the updated regular bootloader.

…

EDIT… it looks like uploading the IDE via the forum fails. Give me a sec.

Good job, guys!

Today, after downloading the latest version of the IDE (1.3.0) from the OpenMV download page and upgrading the firmware to v2.0.0, everything seems to work fine.

Now I can start to play with this board.

Thanks again. Roberto

Thank you. It took a lot of work to get it to that point. We will have a huge KickStarter update about all our progress soon.

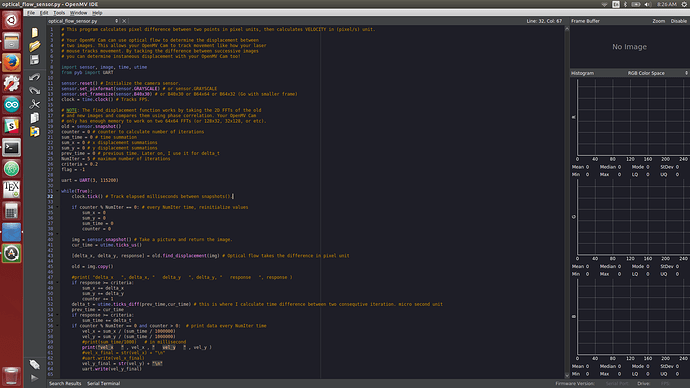

Hello, I use an OpenMV Cam M7 V1. This is the code I wrote.

```python
import sensor, image, time, utime
from pyb import UART

sensor.reset() # Initialize the camera sensor.
sensor.set_pixformat(sensor.GRAYSCALE) # or sensor.GRAYSCALE
sensor.set_framesize(sensor.B40x30) # or B40x30 or B64x64 or B64x32 (Go with smaller frame)
clock = time.clock() # Tracks FPS.
old = sensor.snapshot()

counter = 0 # counter to calculate number of iterations
sum_time = 0 # time summation
sum_x = 0 # x displacement summations
sum_y = 0 # y displacement summations
prev_time = 0 # previous time. Later on, I use it for delta_t
NumIter = 5 # maximum number of iterations
criteria = 0.2
flag = -1
uart = UART(3, 115200)

while(True):
    clock.tick() # Track elapsed milliseconds between snapshots().
    if counter % NumIter == 0: # every NumIter time, reinitialize values
        sum_x = 0
        sum_y = 0
        sum_time = 0
        counter = 0
    img = sensor.snapshot() # Take a picture and return the image.
    cur_time = utime.ticks_us()
    [delta_x, delta_y, response] = old.find_displacement(img) # Optical flow takes the difference in pixel units
    old = img.copy()
    #print( "delta_x ", delta_x, " delta_y ", delta_y, " response ", response )
    if response >= criteria:
        sum_x += delta_x
        sum_y += delta_y
        counter += 1
    delta_t = utime.ticks_diff(prev_time,cur_time) # time difference between two consecutive iterations, in microseconds
    prev_time = cur_time
    if response >= criteria:
        sum_time += delta_t
    if counter % NumIter == 0 and counter > 0: # print data every NumIter time
        vel_x = sum_x / (sum_time / 1000000)
        vel_y = sum_y / (sum_time / 1000000)
        #print(sum_time/1000) # in millisecond
        print("vel_x " , vel_x , " vel_y " , vel_y )
        #vel_x_final = str(vel_x) + "\n"
        #uart.write(vel_x_final)
        vel_y_final = str(vel_y) + "\n"
        uart.write(vel_y_final)
```

I use Linux and my IDE version is openmv-ide-linux-x86_64-1.7.1. I can see “Save script to OpenMV” and “Reset OpenMV” and I use them, but it doesn’t work. I also tried it on Windows, but it didn’t work either. It seems that the script does get saved, but when I power the OpenMV Cam through the “Vin” pin, it doesn’t seem to run the code: I don’t get any data on the other board that is connected to the OpenMV Cam by UART. Is it because the pin doesn’t get enough current, or something else?

Thanks

Hi, please repost your code using code tags. You can click the code button in the forum editor to add those tags. Also, can you tell me if your code works in OpenMV IDE? It should run fine in OpenMV IDE.

If possible, narrow the error down to something specific. It’s more or less impossible for me to help you if you just ask me to find the error. Please narrow the problem down to a specific question and then I can answer it.

Ok, thanks for your advice. The code that I wrote is here.

```python
import sensor, image, time, utime
from pyb import UART

sensor.reset() # Initialize the camera sensor.
sensor.set_pixformat(sensor.GRAYSCALE) # or sensor.GRAYSCALE
sensor.set_framesize(sensor.B40x30) # or B40x30 or B64x64 or B64x32 (Go with smaller frame)
clock = time.clock() # Tracks FPS.
old = sensor.snapshot()

counter = 0 # counter to calculate number of iterations
sum_time = 0 # time summation
sum_x = 0 # x displacement summations
sum_y = 0 # y displacement summations
prev_time = 0 # previous time. Later on, I use it for delta_t
NumIter = 30 # maximum number of iterations
criteria = 0.2
flag = -1
uart = UART(3, 115200)

while(True):
    clock.tick() # Track elapsed milliseconds between snapshots().
    if counter % NumIter == 0: # every NumIter time, reinitialize values
        sum_x = 0
        sum_y = 0
        sum_time = 0
        counter = 0
    img = sensor.snapshot() # Take a picture and return the image.
    cur_time = utime.ticks_us()
    [delta_x, delta_y, response] = old.find_displacement(img) # Optical flow takes the difference in pixel units
    old = img.copy()
    #print( "delta_x ", delta_x, " delta_y ", delta_y, " response ", response )
    if response >= criteria:
        sum_x += delta_x
        sum_y += delta_y
        counter += 1
    delta_t = utime.ticks_diff(prev_time,cur_time) # time difference between two consecutive iterations, in microseconds
    prev_time = cur_time
    if response >= criteria:
        sum_time += delta_t
    if counter % NumIter == 0 and counter > 0: # print data every NumIter time
        vel_x = sum_x / (sum_time / 1000000)
        vel_y = sum_y / (sum_time / 1000000)
        print("vel_x " , vel_x , " vel_y " , vel_y )
        #vel_x_final = str(vel_x) + "\n"
        #uart.write(vel_x_final)
        vel_y_final = str(vel_y) + "\n"
        uart.write(vel_y_final)
```
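One detail worth checking in the loop above is the argument order of `utime.ticks_diff()`. In current MicroPython documentation, `ticks_diff(ticks1, ticks2)` means `ticks1 - ticks2` (wraparound-safe), so the interval since the previous frame would be `ticks_diff(cur_time, prev_time)`; older MicroPython releases used the opposite order, so it is worth confirming against the firmware in use. The sketch below emulates the documented behaviour in plain Python on a PC. The tick period of 2**30 is an assumption (the usual MicroPython value), and `ticks_add`/`average_velocity` here are hypothetical helpers written for the illustration.

```python
# Hypothetical PC-side emulation of MicroPython's utime.ticks_diff().
# Assumption: ticks wrap with a period of 2**30, as on most MicroPython ports.
TICKS_PERIOD = 1 << 30
TICKS_HALF = TICKS_PERIOD // 2


def ticks_add(ticks, delta):
    """Advance a tick value by delta, wrapping like the hardware counter."""
    return (ticks + delta) % TICKS_PERIOD


def ticks_diff(ticks1, ticks2):
    """Signed ticks1 - ticks2, correct across a single counter wraparound."""
    return ((ticks1 - ticks2 + TICKS_HALF) % TICKS_PERIOD) - TICKS_HALF


def average_velocity(displacements_px, intervals_us):
    """Pixels per second from summed displacements and summed intervals (us)."""
    total_s = sum(intervals_us) / 1000000
    if total_s <= 0:
        raise ValueError("total interval must be positive")
    return sum(displacements_px) / total_s


# Example: 5 frames, 20 ms apart, with the counter wrapping in the middle.
prev_time = TICKS_PERIOD - 30000
intervals = []
for _ in range(5):
    cur_time = ticks_add(prev_time, 20000)
    intervals.append(ticks_diff(cur_time, prev_time))  # new minus old: positive
    prev_time = cur_time

print(intervals)                                     # [20000, 20000, 20000, 20000, 20000]
print(average_velocity([1, 1, 1, 1, 1], intervals))  # 50.0
```

With the arguments swapped, every interval comes out as -20000, which flips the sign of the computed velocity.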

I can run the code in the IDE, and there’s no problem with that. I have another board, and I send data (vel_x and vel_y) to it through UART. When I run the code in the IDE, I can read the data on the second board. My problem is that I don’t want to run the code from the IDE every time. I want to save it to the board so that when I power it, it runs the code (so I don’t need the IDE anymore). I saw that you said to save the code and reset the OpenMV Cam from Tools. I did that. Then I disconnected the OpenMV Cam from my laptop, powered it again without running the IDE, and connected it to the second board (I’m sure my UART connection is correct, I’ve checked it numerous times), but I was not able to read anything.

I also updated the firmware from the bar at the bottom of the IDE, followed the steps, and it now shows that the firmware is the latest version. But I still have to run the code in the IDE to be able to see data on the second board.

Thanks

I also have a second problem.

I am working on a project. I want to mount an OpenMV Cam M7 V1 on a quadcopter to give me the velocity of the aircraft (the camera faces downward). The documentation says that “image.find_displacement()” gives the displacement in pixels. Based on this displacement and “delta_t”, I can get velocity: I divide the displacement by “delta_t”. When the camera is fixed and not moving, it gives a velocity of 0.2 pixel/s in the x direction and zero in y. How accurate is “image.find_displacement()”? My second question is this: since I need velocity in m/s, do you know how to convert it from pixel/s to m/s? I have a downward-facing sensor to measure the height of the quadcopter.

I tested my code on the aircraft to see how it works. It was giving me random data in pixel/s. Not even noisy data: all random, between -1 and 7 pixel/s (0.01, -0.123, 1.234, 0.238, etc.).

I haven’t used find_displacement() in a while. The last time I used it, it kinda worked, but I would not rely on that feature right now until it gets fixed. The algorithm does indeed work but is too fragile currently, and I need to spend some time making it more robust.

As for the script not running, that shouldn’t be an issue. I’m wondering if you’re running into the timeout char problem. Can you try the MAVLink AprilTag UART script and see if that runs on the board? It should work without any issue. Please use Tools, Save script to OpenMV Cam, followed by Tools, Reset OpenMV Cam, and you should see serial data.

I’ve been using my OpenMV Cam for DIY robocar races lately, including the serial port, so I know all this stuff works. I’m thinking you may need to add the timeout_char=1000 argument to the UART call.

Thanks for your message. I don’t think you read my question at all. I said the code I wrote is running great, and I can receive data on the second board through UART. My question is this: I save the script to the OpenMV Cam and then reset the board from Tools. Then I disconnect the camera from my laptop and connect it to the second board, which is powered on. But I don’t get any data when I run minicom on the second board. Is my procedure wrong, or is there something else I need to do? BTW, I use Linux. Also, is there any function other than “find_displacement” for optical flow?

Please read my question and previous questions. I don’t want to come back here and ask the same question over and over again.


Your tone is not appreciated. I don’t generally have time during the week to sit down and test folks’ code. I answer most of the forum posts on my phone and try to provide what knowledge I can while not in front of my OpenMV work computer.

…

Anyway, I tested your code on my PC and it works while running from the IDE with a serial terminal connected. I then did: “Save Script to OpenMV Cam” followed by “Reset OpenMV Cam” and the script continued to output the data to the serial terminal on the PC. Note I’m using the latest firmware along with OpenMV IDE v1.7.1.

I then connected the serial output to an LCD screen and it continued to work. Finally, I removed the camera from USB power entirely and had it running off 5 V from a breadboard power supply attached to the LCD screen. Note that I was using a simple serial LCD that just has power/GND/serial wires.

Can you describe how the camera is sending data to the second board? Do both boards share a common ground?

…

As for optical flow try this code out:

```python
# Optical Flow Example
#
# Your OpenMV Cam can use optical flow to determine the displacement between
# two images. This allows your OpenMV Cam to track movement like how your laser
# mouse tracks movement. By tracking the difference between successive images
# you can determine instantaneous displacement with your OpenMV Cam too!

import sensor, image, time, math

sensor.reset() # Initialize the camera sensor.
sensor.set_pixformat(sensor.GRAYSCALE) # or sensor.GRAYSCALE
sensor.set_framesize(sensor.B64x64) # or B40x30 or B64x64
clock = time.clock() # Tracks FPS.

# NOTE: The find_displacement function works by taking the 2D FFTs of the old
# and new images and compares them using phase correlation. Your OpenMV Cam
# only has enough memory to work on two 64x64 FFTs (or 128x32, 32x128, or etc).

old = sensor.snapshot()

while(True):
    clock.tick() # Track elapsed milliseconds between snapshots().
    img = sensor.snapshot() # Take a picture and return the image.

    [delta_x, delta_y, response] = old.find_displacement(img)
    delta_x = 0 if math.isnan(delta_x) else int(delta_x)
    delta_y = 0 if math.isnan(delta_y) else int(delta_y)

    old = img.copy()

    print("%6f X - %6f Y - %6f QoR - %6f FPS" % \
          (delta_x, delta_y, response, clock.fps()))
```
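The NOTE in the example says find_displacement() compares the FFTs of the old and new frames using phase correlation. As a rough illustration of that idea only (not OpenMV’s implementation, which works in 2D on the camera), here is a 1-D version in plain Python with a naive O(n²) DFT: keeping only the phase of the cross-power spectrum and transforming back gives a single sharp peak at the shift between two circularly shifted signals. The function names are hypothetical.

```python
import cmath


def dft(x):
    """Naive discrete Fourier transform (O(n^2)); fine for a short demo."""
    n = len(x)
    return [sum(x[k] * cmath.exp(-2j * cmath.pi * k * m / n) for k in range(n))
            for m in range(n)]


def idft(X):
    """Inverse of dft()."""
    n = len(X)
    return [sum(X[m] * cmath.exp(2j * cmath.pi * k * m / n) for m in range(n)) / n
            for k in range(n)]


def phase_correlate_1d(old, new):
    """Estimate the circular shift s such that new[k] ~= old[k - s].

    Returns (shift, peak): shift in samples (negative = moved left) and the
    height of the correlation peak (1.0 for a perfect circular shift when no
    frequency bin of the signal is empty).
    """
    A = dft(old)
    B = dft(new)
    cross = []
    for a, b in zip(A, B):
        p = b * a.conjugate()                          # cross-power spectrum
        mag = abs(p)
        cross.append(p / mag if mag > 1e-12 else 0j)   # keep only the phase
    r = [v.real for v in idft(cross)]
    n = len(r)
    peak_index = max(range(n), key=lambda i: r[i])
    shift = peak_index if peak_index <= n // 2 else peak_index - n
    return shift, r[peak_index]


old = [0, 1, 3, 7, 2, 0, 0, 5]
moved_right = old[-2:] + old[:-2]   # circular shift by +2 samples
moved_left = old[1:] + old[:1]      # circular shift by -1 sample
print(phase_correlate_1d(old, moved_right)[0])  # 2
print(phase_correlate_1d(old, moved_left)[0])   # -1
```

Real camera frames are not perfect circular shifts (edges enter and leave the view, lighting changes), which lowers and spreads the peak; that is one reason the raw output is noisy.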

You should clearly see the camera not outputting random noise but movement data when you move the camera to the left or right, up or down. The raw output from find displacement is super noisy since it’s very precise. The above int() call on the deltas removes a lot of the noise.

As for converting pixels to velocity, the best thing to do would be to just measure the pixel deltas accumulated while moving and then compare that to the distance traveled in that time. This will give you the conversion factor you need. However, such a conversion factor is only valid for a certain height: as the height changes, the conversion factor will change.
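The calibration approach described above boils down to two divisions. Below is a minimal sketch with hypothetical function names and made-up example numbers (not measurements). The height rescaling helper assumes a pinhole camera pointing straight down at flat ground, where the metres-per-pixel factor grows in proportion to height.

```python
def calibrate_scale(distance_m, accumulated_px):
    """Metres per pixel, from a run of known length and the summed pixel deltas."""
    if accumulated_px == 0:
        raise ValueError("no pixel motion was accumulated")
    return distance_m / accumulated_px


def to_mps(vel_px_per_s, scale_m_per_px):
    """Convert a pixel velocity to metres per second with a calibrated scale."""
    return vel_px_per_s * scale_m_per_px


def rescale_for_height(scale_m_per_px, calib_height_m, height_m):
    """Assumption: pinhole camera looking straight down at flat ground,
    so metres per pixel scales linearly with height."""
    return scale_m_per_px * height_m / calib_height_m


# Illustrative numbers only: a 2 m run at 1 m height accumulated 400 px.
scale = calibrate_scale(2.0, 400)                        # 0.005 m per pixel at 1 m
print(to_mps(30, scale))                                 # about 0.15 m/s at 1 m
print(to_mps(30, rescale_for_height(scale, 1.0, 2.0)))   # about 0.3 m/s at 2 m
```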

If you want to actually do the math… then you can. So, you’ll need to grab the camera’s focal length from our webpage along with the pixel size on the camera.

Here’s that info:

```python
import math

lens_mm = 2.8 # Standard Lens.
lens_to_camera_mm = 22 # Standard Lens.
sensor_w_mm = 3.984 # For OV7725 sensor - see datasheet.
sensor_h_mm = 2.952 # For OV7725 sensor - see datasheet.
x_res = 160 # QQVGA
y_res = 120 # QQVGA

f_x = (lens_mm / sensor_w_mm) * x_res # focal length in pixels (x)
f_y = (lens_mm / sensor_h_mm) * y_res # focal length in pixels (y)
c_x = x_res / 2 # optical center (x)
c_y = y_res / 2 # optical center (y)
h_fov = 2 * math.atan((sensor_w_mm / 2) / lens_mm) # horizontal field of view, radians
v_fov = 2 * math.atan((sensor_h_mm / 2) / lens_mm) # vertical field of view, radians
```

Here’s a write up on the math:

http://digital.ni.com/public.nsf/allkb/1BD65CB07933DE0186258087006FEBEA

You will of course need some type of distance sensor to detect the height.
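Combining the lens and sensor constants listed above with a height reading gives the conversion from first principles. Under a pinhole model with the camera pointing straight down at flat ground and no tilt or rotation (assumptions: a quadcopter pitches and rolls, which also shows up as image motion), one pixel of image motion corresponds to height / f_x metres on the ground. Note that f_x has to be computed for the resolution the displacement is measured at (e.g. 40 pixels wide for B40x30 rather than 160), assuming that frame still spans the sensor’s full width. The function names are hypothetical.

```python
import math

lens_mm = 2.8        # Standard lens (value from the post above).
sensor_w_mm = 3.984  # OV7725 width (value from the post above).


def focal_length_px(lens_mm, sensor_mm, resolution_px):
    """Focal length expressed in pixels of an image resolution_px wide."""
    return (lens_mm / sensor_mm) * resolution_px


def pixel_velocity_to_mps(vel_px_per_s, height_m, focal_px):
    """Pinhole model, camera straight down at flat ground:
    one pixel of image motion = height_m / focal_px metres of ground motion."""
    return vel_px_per_s * height_m / focal_px


f_x = focal_length_px(lens_mm, sensor_w_mm, 40)  # e.g. a 40-pixel-wide frame
print(round(f_x, 2))                             # 28.11
print(pixel_velocity_to_mps(10, 1.5, f_x))       # about 0.53 m/s at 1.5 m height
```

This agrees with the field-of-view route: the ground footprint is 2 · h · tan(h_fov / 2) wide, and dividing it by the image width in pixels gives the same h / f_x metres per pixel.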

Thanks for the reply. I apologize if my tone wasn’t good.

I use UART from the camera to the second board to send data. I do the following steps:

- Connect the camera to the PC

- Open the IDE and open the code

- Connect the camera to the IDE with the button at the bottom left

- Run the code to make sure it works

- Stop the code (the camera is still connected via the bottom-left button)

- Go to Tools and save the code to the camera

- Click Yes in the pop-up window to remove comments from the code

- Go to Tools and reset the camera (when I reset the camera, it shows as disconnected from the PC; I don’t know why)

- Remove the camera from the PC and power it from the USB port of the second board

- Run “sudo stty -F /dev/ttyS4 115200” followed by “sudo cat /dev/ttyS4”, but I don’t see any data (the baud rate and port are correct)
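Once bytes do arrive on the second board, the script’s output format (str(vel_y) followed by "\n") is easy to turn back into numbers. Below is a minimal sketch in plain Python, assuming the serial data reaches it in arbitrary chunks of bytes; how those bytes are read (a serial library, a file handle on /dev/ttyS4, etc.) is left out. The class name is hypothetical, and malformed lines such as a partial first line are simply skipped.

```python
class LineParser:
    """Accumulates raw serial bytes and returns one float per complete line."""

    def __init__(self):
        self._buffer = b""

    def feed(self, chunk):
        """Add a chunk of bytes; return the floats from any completed lines."""
        self._buffer += chunk
        *lines, self._buffer = self._buffer.split(b"\n")  # keep the unfinished tail
        values = []
        for line in lines:
            text = line.decode("ascii", errors="replace").strip()  # drops "\r" too
            if not text:
                continue
            try:
                values.append(float(text))
            except ValueError:
                pass  # skip garbage, e.g. a partial first line
        return values


parser = LineParser()
print(parser.feed(b"0.25\n-1.5"))  # [0.25]  ("-1.5" is still incomplete)
print(parser.feed(b"\n3\n"))       # [-1.5, 3.0]
```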

I currently use another camera with OpenCV installed on the second board, but it would be better if it were possible to do that with the OpenMV Cam.

Maybe you don’t have permission to write to the OpenMV storage (flash or SD card).

Try this:

- Save the script from the IDE like you do.

- Reset the cam and wait for it to mount the storage.

- Run cat main.py and see if it changed at all.

Another solution: just edit main.py and put your code there.

Hi, can you verify that the script was written to the camera, and maybe blink an LED to know that your script is running once it’s disconnected from your PC?

You should be able to see your script on the SD card.