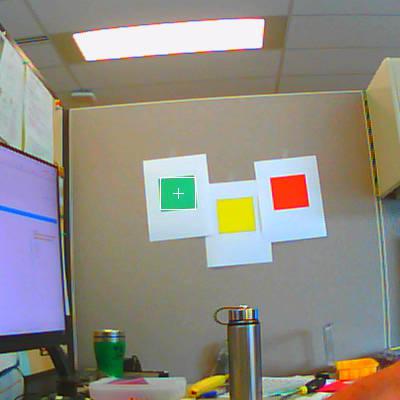

I worked on this yesterday and got it to detect a specific green square on the wall roughly 6 feet from the camera. I had to fiddle with the thresholds a bit, but selecting the target color patch with the mouse and reading the LAB color space histograms makes that easy. I am still having some trouble getting the exposure the way I want, though; I’ll detail that in another post. So far, the code finds the patch of green on the wall and reports how many pixels it is to the left or right of center, or that it is in the center zone (about 10 pixels wide). The next step is to add code for the pan/tilt servos to center the patch horizontally. I’m not sure yet how to do a PID loop on each axis (sounds like I’m tuning my 3D printer), so I’ll research that.
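
The left/right/center decision described above can be pulled out into a small function that runs without the camera, which makes it easy to check on a PC. This is only a sketch: `classify_offset`, `CENTER_X`, and `DEAD_ZONE` are names made up for illustration, not part of the OpenMV API, and the values assume the 400-pixel-wide window used in the script.

```python
CENTER_X = 200   # assumed horizontal center of a 400-pixel-wide window
DEAD_ZONE = 5    # assumed half-width of the center zone, in pixels


def classify_offset(cx, center=CENTER_X, dead_zone=DEAD_ZONE):
    """Return (zone, pixels) for a blob centroid x coordinate.

    zone is "left", "right", or "center"; pixels is the distance
    from the center line (0 when the blob is inside the center zone).
    """
    offset = cx - center
    if offset < -dead_zone:
        return "left", -offset
    if offset > dead_zone:
        return "right", offset
    return "center", 0


if __name__ == "__main__":
    for cx in (150, 195, 200, 206):
        print(cx, classify_offset(cx))
```

Keeping the signed `offset` around (negative for left, positive for right) is convenient later, because that same number can serve as the error input to a servo control loop.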

I attached a picture of what the camera sees.

Here is my code.

# My adaptation of the Single Color RGB565 Blob Tracking Example to detect a single green square
#
# Scott Murchison

import sensor, image, time, math, utime

threshold_index = 2 # 0 for red, 1 for yellow, 2 for green, 3 for blue

# Color Tracking Thresholds using Lab color model (L Min, L Max, A Min, A Max, B Min, B Max)
thresholds = [(30, 100, 15, 127, 15, 127),  # red_thresholds (30, 100, 15, 127, 15, 127)
              (70, 100, -30, 30, 60, 90),   # yellow_thresholds (70, 90, -30, 30, 60, 90)
              (75, 90, -60, -40, 0, 30),    # green_thresholds (30, 100, -64, -8, -32, 32)
              (20, 50, -20, 20, -45, -10)]  # blue_thresholds (0, 30, 0, 64, -128, 0)

sensor.reset()
sensor.set_pixformat(sensor.RGB565)
sensor.set_framesize(sensor.VGA)
sensor.set_windowing((400,400)) # 400x400 window centered in the VGA frame
sensor.skip_frames(time = 1000) # let the sensor settle before locking settings
sensor.set_auto_gain(False) # must be turned off for color tracking
sensor.set_auto_whitebal(False) # must be turned off for color tracking
sensor.set_auto_exposure(False)
sensor.set_brightness(0) # range -3 to 3
sensor.set_saturation(3) # range -3 to 3
sensor.set_gainceiling(2) # 2,4,8,16,32,64,128
sensor.set_contrast(-1) # range -3 to 3
clock = time.clock()

# Only blobs with more pixels than "pixels_threshold" and more area than "area_threshold" are
# returned by "find_blobs" below. Change "pixels_threshold" and "area_threshold" if you change the
# camera resolution. "merge=False" keeps overlapping blobs separate; "merge=True" would merge them.
while(True):
    clock.tick()
    img = sensor.snapshot()
    for blob in img.find_blobs([thresholds[threshold_index]], pixels_threshold=100, area_threshold=100, merge=False):
        # These values are stable all the time.
        img.draw_rectangle(blob.rect())
        img.draw_cross(blob.cx(), blob.cy())
        # Extract the x,y coordinates of each color
        coordinates = (blob.cx(), blob.cy())
        print("X=%d Y=%d" % coordinates)
        x_coord = blob.cx()
        y_coord = blob.cy()
        # The window is 400 pixels wide, so x = 200 is the horizontal center.
        if x_coord < 195:
            h_offset = (200 - x_coord)
            print("patch is %d pixels left of center" % h_offset)
        elif x_coord > 205:
            h_offset = (x_coord - 200)
            print("patch is %d pixels right of center" % h_offset)
        else:
            print("patch is in center region")
    # insert a delay
    utime.sleep_ms(1000)
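
For the pan/tilt step mentioned at the top, a PID loop for one axis could look roughly like the sketch below. It is a generic textbook PID, not something taken from the OpenMV examples: the `PID` class, its gains, and `out_limit` are all hypothetical, and the gains would need tuning on the real servos. The error fed in would be the signed pixel offset of the blob from center (for example `blob.cx() - 200` for pan), with a second instance handling tilt.

```python
class PID:
    """Minimal single-axis PID controller (illustrative, not an OpenMV API)."""

    def __init__(self, kp, ki, kd, out_limit):
        self.kp = kp                # proportional gain
        self.ki = ki                # integral gain
        self.kd = kd                # derivative gain
        self.out_limit = out_limit  # output is clamped to +/- this value
        self.integral = 0.0
        self.prev_error = None

    def update(self, error, dt):
        """Return the clamped correction for this error; dt is in seconds."""
        self.integral += error * dt
        if self.prev_error is None:
            derivative = 0.0        # no history yet on the first call
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        output = (self.kp * error
                  + self.ki * self.integral
                  + self.kd * derivative)
        return max(-self.out_limit, min(self.out_limit, output))


if __name__ == "__main__":
    pan_pid = PID(kp=0.05, ki=0.0, kd=0.0, out_limit=5.0)  # placeholder gains
    # Pretend the blob is 40 pixels right of center and frames are 0.1 s apart.
    print(pan_pid.update(40, 0.1))
```

Starting with only `kp` nonzero and adding `kd` or `ki` later if the patch overshoots or never quite reaches center is a common way to tune this. Note that the 1000 ms delay in the loop above would make any control loop very sluggish, so it would probably need to be shortened once the servos are driven from it.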